The process aligns a source image to a reference image. Its algorithm automatically detects and minimizes misalignments between features using rigid-body transformation. The results are GeoTIFF files of matching optical assets with improved positional accuracy.

Coregistration can be used as a preprocessing step before change detection, data fusion, and DEM generation.

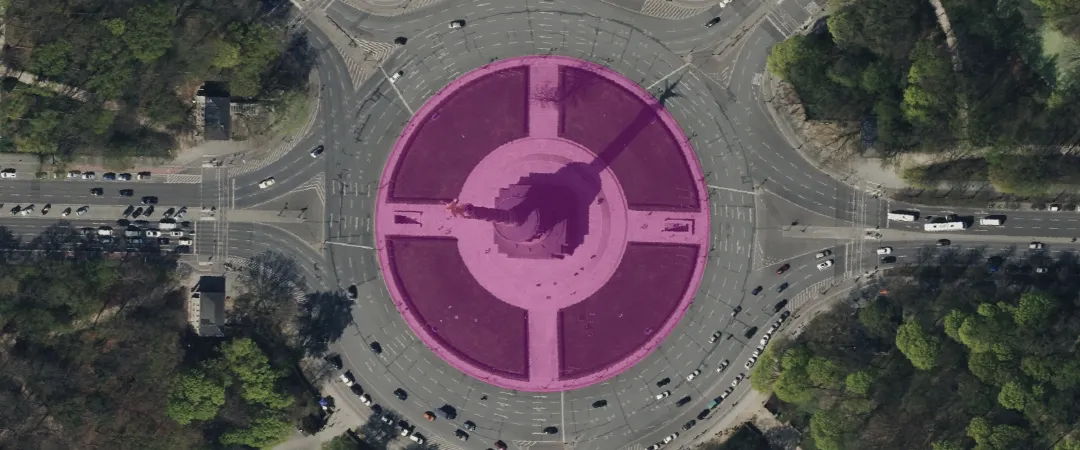

Examples of input images:

The process produces the following outputs:

- A GeoTIFF of the coregistered source image

- A GeoTIFF floating-point displacement map of the calculated misalignment after coregistration

- A GeoTIFF heatmap for displacement, with warmer colors showing greater misalignments

If the input files contain too few tie points, the floating-point displacement map and the heatmap are not generated.

| Specification | Description |
|---|---|
| Provider | Simularity |
| Process type | Enhancement from 50 credits per km² |

The coregistration algorithm aligns images accurately, even if they have different resolutions or sensor types. It can handle clouds and land cover changes. It calculates sub-pixel shifts by analyzing local image patches, then aligns the images using rigid-body translation and rotation.
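As an illustration of what a rigid-body alignment from tie points can look like, here is a minimal Python sketch. It is not Simularity's implementation: it assumes that matched tie point coordinates (possibly sub-pixel) are already available for both images, and it uses a standard least-squares fit of one rotation and one translation. The function names `fit_rigid_2d` and `apply_rigid_2d` are hypothetical.

```python
import math

def fit_rigid_2d(source_pts, reference_pts):
    """Least-squares rotation angle (radians) and translation that map
    source tie points onto their matching reference tie points."""
    n = len(source_pts)
    if n < 2 or n != len(reference_pts):
        raise ValueError("need at least two matched tie points")

    # Centroids of both point sets
    sx = sum(p[0] for p in source_pts) / n
    sy = sum(p[1] for p in source_pts) / n
    rx = sum(q[0] for q in reference_pts) / n
    ry = sum(q[1] for q in reference_pts) / n

    # Accumulate dot and cross products of the centered coordinates
    dot = 0.0
    cross = 0.0
    for (px, py), (qx, qy) in zip(source_pts, reference_pts):
        ax, ay = px - sx, py - sy
        bx, by = qx - rx, qy - ry
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx

    # The optimal rotation maximizes cos(theta) * dot + sin(theta) * cross
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)

    # The translation moves the rotated source centroid onto the reference centroid
    tx = rx - (c * sx - s * sy)
    ty = ry - (s * sx + c * sy)
    return theta, (tx, ty)

def apply_rigid_2d(points, theta, translation):
    """Rotate points by theta, then shift them by translation."""
    c, s = math.cos(theta), math.sin(theta)
    tx, ty = translation
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]
```

Because the transformation is rigid, it corrects only shift and rotation. It does not rescale or warp the source image.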

To get the best results, make sure that:

- Both data items have matching geometric and radiometric processing levels.

- The GSD of the two data items doesn’t differ by more than 25%.
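The GSD recommendation can be checked before submitting a job. The sketch below is one possible interpretation: it measures the difference relative to the finer (smaller) GSD, which is an assumption, since this page does not state the reference value. The function name `gsd_difference_ok` is hypothetical.

```python
def gsd_difference_ok(source_gsd_m, reference_gsd_m, max_relative_diff=0.25):
    """Return True if the two GSD values (in meters) differ by no more than
    max_relative_diff, measured relative to the finer GSD (an assumption)."""
    if source_gsd_m <= 0 or reference_gsd_m <= 0:
        raise ValueError("GSD values must be positive")
    finer = min(source_gsd_m, reference_gsd_m)
    coarser = max(source_gsd_m, reference_gsd_m)
    return (coarser - finer) / finer <= max_relative_diff
```

For example, a 0.5 m source and a 0.6 m reference differ by 20% under this definition and pass the check, while 0.5 m and 0.7 m differ by 40% and fail it.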

Input data items must come from a CNAM-supported collection and be added to storage in 2023 or later.

| Criteria | Requirement |
|---|---|
| Product type | Input data items must come from a multispectral collection. |
| Processing level | Input data items must be georectified or orthorectified. |
| Spectral bands | |
| Geometry | The geometries of input data items must overlap by at least 20%. |
| Cloud coverage | The cloud coverage of input data items must be less than 25%. |
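The geometry and cloud coverage requirements from the table can be approximated with a quick local pre-check. The sketch below makes several assumptions that this page does not confirm: footprints are simplified to bounding boxes in the form `(min_x, min_y, max_x, max_y)`, overlap is measured relative to the smaller footprint, and cloud coverage is given as a percentage per data item. All function names are hypothetical.

```python
def bbox_area(bbox):
    """Area of a (min_x, min_y, max_x, max_y) bounding box."""
    min_x, min_y, max_x, max_y = bbox
    return max(0.0, max_x - min_x) * max(0.0, max_y - min_y)

def overlap_fraction(bbox_a, bbox_b):
    """Intersection area divided by the area of the smaller bounding box."""
    smaller = min(bbox_area(bbox_a), bbox_area(bbox_b))
    if smaller <= 0:
        raise ValueError("bounding boxes must have a positive area")
    intersection = (
        max(bbox_a[0], bbox_b[0]),
        max(bbox_a[1], bbox_b[1]),
        min(bbox_a[2], bbox_b[2]),
        min(bbox_a[3], bbox_b[3]),
    )
    # bbox_area returns 0.0 when the boxes do not intersect
    return bbox_area(intersection) / smaller

def passes_prechecks(source_bbox, reference_bbox, source_cloud_pct, reference_cloud_pct):
    """Check the 20% overlap and 25% cloud coverage limits listed in the table."""
    enough_overlap = overlap_fraction(source_bbox, reference_bbox) >= 0.20
    clear_enough = source_cloud_pct < 25 and reference_cloud_pct < 25
    return enough_overlap and clear_enough
```

A bounding-box test is only a rough filter. Real footprints are polygons, so a pair that passes here can still be rejected by the platform.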

Use the coregistration-simularity name ID for the processing API.

    {
      "inputs": {
        "title": "Coregistering imagery over Berlin",
        "sourceItem": "https://api.up42.com/v2/assets/stac/collections/21c0b14e-3434-4675-98d1-f225507ded99/items/23e4567-e89b-12d3-a456-426614174000",
        "referenceItem": "https://api.up42.com/v2/assets/stac/collections/21c0b14e-3434-4675-98d1-f225507ded99/items/edeb6310-ea9a-4d8e-943d-11a5f3757824"
      }
    }

| Parameter | Overview |
|---|---|
| inputs.title | string, required. The title of the output data item. |
| inputs.sourceItem | string, required. The absolute API path to the source data item. |
| inputs.referenceItem | string, required. The absolute API path to the reference data item. The positional accuracy of the source image will be improved against this reference. |
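The request body can also be assembled programmatically. The following Python sketch only builds and validates the payload from the three required parameters. It does not send a request, because the endpoint and authentication are not described on this page. The helper name `build_coregistration_inputs` is hypothetical, and the `https://` check is an assumed reading of "absolute API path".

```python
import json

def build_coregistration_inputs(title, source_item, reference_item):
    """Build the request body for the coregistration-simularity process."""
    fields = {
        "title": title,
        "sourceItem": source_item,
        "referenceItem": reference_item,
    }
    for name, value in fields.items():
        # All three parameters are required strings
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"inputs.{name} is required and must be a non-empty string")
    for name in ("sourceItem", "referenceItem"):
        # Assumption: an absolute API path is a full https:// URL
        if not fields[name].startswith("https://"):
            raise ValueError(f"inputs.{name} must be an absolute API path")
    return {"inputs": fields}

payload = build_coregistration_inputs(
    title="Coregistering imagery over Berlin",
    source_item="https://api.up42.com/v2/assets/stac/collections/21c0b14e-3434-4675-98d1-f225507ded99/items/23e4567-e89b-12d3-a456-426614174000",
    reference_item="https://api.up42.com/v2/assets/stac/collections/21c0b14e-3434-4675-98d1-f225507ded99/items/edeb6310-ea9a-4d8e-943d-11a5f3757824",
)
print(json.dumps(payload, indent=2))
```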